Release Date: 11-2000

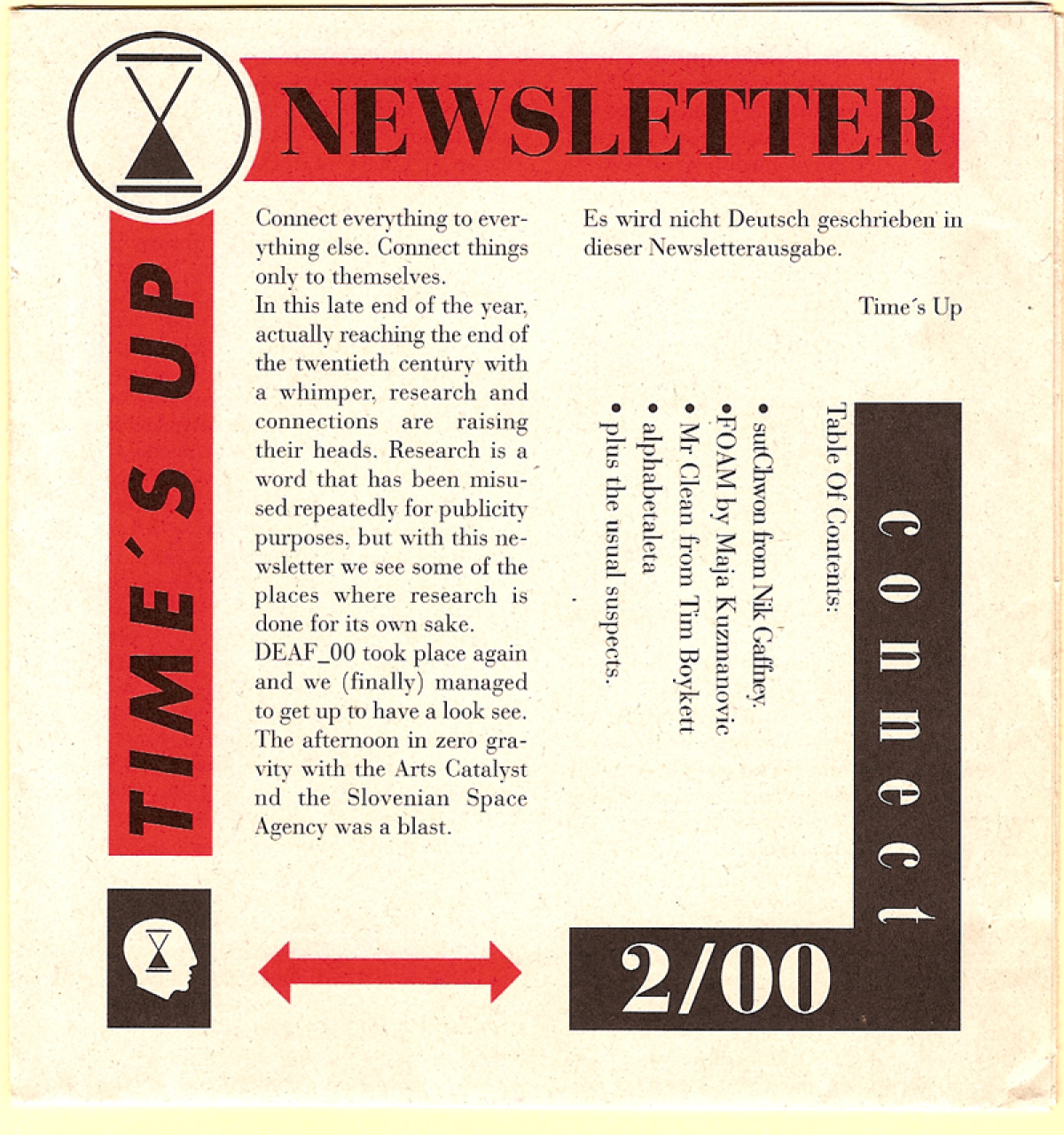

Newsletter 02-00 Connect

#

introduction

Connect everything to everything else. Connect things only to themselves.

At this late end of the year, as we actually reach the end of the twentieth century (with a whimper), research and connections are raising their heads. Research is a word that has been misused repeatedly for publicity purposes, but in this newsletter we see some of the places where research is done for its own sake.

DEAF_00 took place again, and we (finally) managed to get there for a look-see. The afternoon in zero gravity with the Arts Catalyst and the Slovenian Space Agency was a blast.

There is no German in this issue of the newsletter.

#

editorial

How much news can a newsletter carry? More or less enough. It is late 2000 and the plans are beginning to sprout. The year’s slog has begun to pay off, and the possibilities for new projects are popping up like mushrooms after a spring storm. On the back you can read about Nik Gaffney’s plans to connect everything to everything else, an admirable aim and a result of the ponderings that surrounded CTL2000 like a memetic spore cloud. An introductory text about FOAM, a laboratory attached to a larger long-term research facility in Brussels, introduces another lab with conceptual ties to the Time’s Up Research Department, with subtle differences in flavour. Some other texts touch upon where we are heading, but in summary it all comes down to connections: there are too many people doing too many interesting things for us to remain still for long, and although time is on our side, we need and desire our collaborations to add spice to the mix. I daresay more connections are imminent, and I look forward to hearing about them.

#

Mr. Clean

The idea of the body’s immune system as an intelligent, learning system goes back through the annals of biocybernetics. Our immune system is educated through experience: the school of immunisation/vaccination, the breast milk of our mothers. Hyperactivity of the immune system is the source of allergic responses, and this immunological equivalent of illiteracy is the curse of those brought up in modern hypersterile environments.

The immune system as genius is the system that responds exactly, but not excessively: attacking pathogens, recognising self. A kind of appropriate intelligence; moderation in all things.

At the DEAF_00 symposium, Eugene Thacker (via Prema Murthy/Fakeshop) discussed various ideas orbiting around the platonic points of the immune system, stem cell healing, tissue engineering and disposable body parts (“Don’t worry, I’ll just grow another one!”). He talked about a possible future in which preventative practice was possible: a scenario where our immune system was “predictive and hyperresponsive”, healing damage before it was even done. In this scenario the immune system, in contrast to its streetwise, experience-based brother, is the university-educated daughter with the title of genius.

A while ago, in the aftershock of the early nineties smartdrug memespace infection, I penned a small story with a thread about drugs that would improve not so much the brain as the body. Not the teeth-grinding speed burst or muscle-pumping steroid use, but cleverly produced drugs that would clean the body of the ingestor, de-scunging the neural surfaces and polishing the liver, leaving the user feeling cleaner and better: a bright enamel stove, all clean for the impending party. This tablet treatment, swallowing bundles of nanobot cleaners (although the speculative mechanism remained, by necessity, unclear), would lead to a wonderful clean feeling with no comedown: there would be no toxic residues to be removed, no exhausted braincell centers. Such a substance would be as addictive as heroin, but only psychologically.

But what happens when there is nothing left for the cleaner to clean? Digging deeper into the cupboards, the cleaner polishes the antique family silver and irons the underwear. Old hankies are sorted and the older ones disposed of. And there’s the rub. Things that do not match the staff’s escalating expectations of cleanliness start disappearing from the ever cleaner household. We are no longer good enough: the polishing and purging begins to locate substandard specimens of nerve fibre or muscle tissue and slowly disposes of the garbage. We ourselves are not up to scratch.

A hyperreactive immune system is not far from the enthusiastic but bored policeman, worrying about possible problems with itinerant bands of youths or strange collections of untidily attired intellectuals. Unable to distract himself with car chases or burglaries, he resorts to pouncing on parked cars with roadworthiness inspections, or on romantic couples with identity checks. Probably something we need about as much as a perfect body.

#

alphabetaleta

Dear Alpha!

Sorry I haven’t written for so long. A combination of work and procrastination prevented it... Now I am writing to you after some further attempts to upload Mr Ankunft’s conscious being into the computer system. Unfortunately, he was not very well this summer, so we had to postpone the experiments until the last few weeks. He did not eat very much and acted very peculiarly. He showed a dire need for love (especially in the mornings), while at the same time losing interest in nearly everything. A slight depression, I would say, although this had never happened to him in summer before. Anyway, he is well now and shows his old curiosity about the project.

The good thing about his indisposition is that the delay gave us the opportunity to finish extending the maximum amount of brain memory that can be uploaded to the computer. This “ACTIVEMEM” extension is a sort of server that draws/collects memory from throughout the net and provides it to the system kernel. It should give Ankunft the ability to take at least the active memories of his last 10 days in the waking state into the system... until now it had only been possible to upload his compressed personality structure. Now he should be able to remember something (what?) of his waking life during his stay in the system (very slowly, for sure; we reckon he will feel somewhat drunk due to the memory latency of the net).

Actually, we don’t know exactly how this extension will affect the upload state; this is what we are trying to find out in our current experiments. But now we are facing some problems with the creature-computer connection. The total upload and compression time in the summer of last year averaged 6 h 40 min. With the new extension it now takes an estimated 20 hours to put his brain into the tin, and that’s just for an 8-minute stay. Furthermore, we have to shave patches of his thick winter fur, which is no comfort to him in these wet and cold days. All in all, not reasonable conditions for the test subject...

That’s the status quo of my efforts: work in progress, as they say. I think it’ll take us another week of tests and several improvements to get Ankunft into the upload state. I’ll let you know then. In the meantime I hope to hear (read?) from you.

Best wishes, Beta

#

FOAM

FOAM or advanced mysticism

foundation of affordable mysticism

further organic adaptive melting

focussed organic application mesmerism

focussed osmotic application morphology

formant of apparent morphology

formal organic advancement mystics

foundation of amplified miracles

forming organic adaptive music

fungus of applicable magnetism

foundation of aesthetic monsters

focus of aperiodic mysticism

foundation of articulate magic

focus of applicable melting

“... active exhalations work together, not to bring about some hypothetical fusion of individual beings, but to collectively inflate the same bubble, thousands of rainbow-tinged bubbles, provisional universes, shared worlds of significations.” Lévy

FOAM is an association of artists, technologists and researchers exploring novel modes and resources of cultural expression. It is conceived as a non-profit organisation, based at Starlab, a multidisciplinary research institute in Brussels, Belgium. FOAM is developing itself as a global network of cultural and scientific researchers (and their organisations). The network aims to share ideas and information at occasional meetings (workshops), as well as to collaborate remotely and form temporary projects at one of the FOAM/Starlab sites (presently Brussels and Barcelona) or at the sites of associated organisations. By becoming a network rather than a sedentary institution at a single site, FOAM will retain a great deal of flexibility, which is needed for exploring manifold methods of cooperation and production.

FOAM is an ‘edge-habitat’ where discrete disciplines, individuals and realities symbiotically shape a peculiar hybrid whole. In other words, FOAM is a site of translation between the arts, humanities and sciences, where the separate languages form the building blocks of the work but over time become an indistinguishable linguistic mongrel. This process of hybridisation is accelerated by one common denominator: the use of technology. Although all the disciplines involved have used technology for centuries, only recently has the same tool, the computer, come into use in visual arts, sonology, biology, textile and architectural design, linguistics, physics and many more. At present, the boundaries between these disciplines still exist, but they are questioned and often crossed.

A common mistake in art and technology couplings is that the artist is brought to the institute to ‘make the slides look nice’, or the scientist is asked to participate in an artistic project merely to do the tech support and system administration. This is the error we wish to avoid by creating projects that inspire the fusion of separate paradigms, which should result in a better understanding and appreciation of each other’s work. After deconstructing our practices into minuscule, highly specialized fields, we are beginning to fuse them together again. FOAM’s objective is to explore the space between the boundaries, creating new hybrid disciplines and works.

“It is probably quite generally known that in the history of human thinking the most fruitful developments frequently take place at those points where two different lines of thought meet. These lines may have their roots in quite different parts of human culture (...): hence if they actually meet (...) then one may hope that new and interesting developments may follow.” Heisenberg

FOAM Mission “Grow Your Own Worlds”

FOAM = re: definition of:

- art as an ongoing participatory process

- authors and audience as equal partners

- inspiration as the research in the aesthetics of ‘the potential’

- artwork as a world based on interaction and unpredictability

- creativity as a solution for the collision of interests

- artistic goal as the actualisation of the collective imaginary

FOAM = encouragement of:

- construction of responsive (aesthetic) environments on local and global scales

- participation of non-artistic disciplines in the cultural production

- continuous discussion about the meaning and the role of culture in everyday life

- fusion of virtual and physical worlds

- unifying knowledge of separate disciplines

- creation of hybrid realities that employ the advantages of science and technology for the benefit of the future communities

FOAM is process-oriented and engaged in a continuous discussion about the consequences of our present actions. Rather than designing yet another series of over-designed, unnecessary ‘cultural’ artefacts, our objective is to deploy technology consciously for the well-being of a prospective society.

Flexible Research Structure

In order to remain an elastic territory, where the activities can be adapted to the changes in society, technology and market, FOAM tries to encompass three temporal layers and complexities of research:

1. Short-term experiments, prototypes and theoretical reflections (1-3 months). Projects that discuss or react to a ‘burning’ issue, short workshops, co-authoring of papers and articles, PR activities, small models and prototypes, brainstorming sessions for larger projects, etc. This layer has the most flexibility and can engage with a broad range of topics, mostly in applied projects. (In 2000: multisensory narratives, cyberbotany, error + glitch, future of public spaces, collaborative tools.)

2. Yearly thematic projects (3 themes in 1 year). At the beginning of every year, we will assess the most relevant questions, topics, changes or technologies likely to have a strong effect on our world in the following year. Based on this forecast, FOAM will formulate 3 fairly broad themes of interest and select projects that make a substantial contribution to the understanding/innovation of the 3 thematic areas. (In 2000: play, time, bio.art.)

3. Long-term research (5+ years). This layer of FOAM activities is focused on slower but more profound issues. One such research topic is the decrease in global biodiversity, where ethical and innovative answers often stand on opposite sides and fresh ideas are very much needed. The activities will involve both research and development, and will work towards lasting effects for the future society. (Starting in 2000: hybrid reality, genetic manipulation, networked collaboration, generative systems.)

FOAM Activities

An overview of the questions that form a red thread through FOAM’s research agenda can be summarized in (but is not limited to) 5 major fields:

1. Macro Culture REALITY: What will the word reality apply to in the future? Are the ‘physical’ realms more real than the ‘digital’ ones? How do we integrate these two realities into a new, hybrid one? Do we simulate the physical in the virtual (and vice versa), or do we search for ‘real’ digital worlds that can evolve on their own terms? Can artificial realities grow, evolve and decay? Questions about the reality in which our everyday lives are embedded spread across several research fields, including hybrid architecture, generative systems, natural interfaces, tangible media, world-creating tools, etc.

2. Meta Culture CONSCIOUSNESS: How do mutations in the reality around us affect our beliefs and knowledge systems? Topics such as collective consciousness, consilience of knowledge, alchemy, mysticism, (cultural) nomadism, integration of science and technology, communication systems, and future ideologies and epistemologies are building blocks of the ‘Meta Culture’ field.

3. Multi Culture COMMUNITY: How are the new public spheres, communities and places formed and constructed? What are the community values in networked societies? How will we organise future societies? What is the nature of shared experience when collaborations and interactions become increasingly distributed across networks? What are the goal and course of action of distributed democracy and ‘electronic civil disturbance’? Politics, sociology, urbanism, systems design, linguistics and anthropology, among others, will be the main focus of this field.

4. Micro Culture SUBSTANCE: What will the building blocks of biological life be in the future? What new sensations can we feel, and how do we perceive the new dimensions of the realities around us? How do we survive and reproduce? Where are our bodies situated, and what is their function? How do we, as particles in a ‘cultural universe’, relate to the world? Topics such as biotechnology, artificial life, biomimetics, wearable and bio-computing, multisensory communication, etc. will be discussed within this field.

5. Zero Culture LIFE: The crucial asset of our existence, yet one that is too often left unlived while we are busy rushing towards the future. Slow down, start from scratch. Forget cultural and historical biases. Search for new imaginaries. Incite new perspectives, ideas and approaches to meaningful experiences in our lives. Grow your own worlds.

mk nov 2000

#

sutChwon

lagging behind, and skipping ahead

(brief and somewhat distracted musings concerning the nature of nodular transcriptronic pulse mechanic space inverters, amongst other things)

sutChwon = n[tp]M

= subject to change without notice

[author’s note: this piece has emerged from discussions before, during and after the closing the loop sessions in 02.2000eV. it deals with a protocol/system and its implementation in the present tense, even though it is still firmly in the realm of speculation rather than active development. any comments, feedback, abuse, patent violations and|or code would be most appreciated [hermes@phl.cx]]

performance, collaboration and synchronisation

This article is essentially a digression into developments of various ideas that are either half baked, half hearted or half finished, but nonetheless worth pursuing. an attempt at describing a glue layer between indeterminate components. a framework for developing frameworks. another thing that can go wrong in a fragile, indeterminate system. it describes a system or series of protocols which may simply be described as facilitating collaboration between networked participants. it should operate in real time, delayed time or stuttering network time, enabling synchronisation but not depending upon it. it should be robust enough to maintain communication and exchange of data in unstable situations, learn how to interact with unknown or new systems, and run with a range of hardware, software and human configurations. it should be decentralised (peer-to-peer), adaptable, flexible. above all, it should focus on doing what computers do well (crunching data) and, as a result, enable the people who are using it to do what they do well (whatever that may be).

it is more or less a given that different groups and individuals work in different ways in collaborative situations. there is a range of modes of production, interaction and even (perhaps most importantly) modes of explaining “how?”, “what?” and “why?”. a filmmaker, for example, will approach a project quite differently than a musician, architect or programmer. it is not within the scope of this article to describe any of this in detail, apart from pointing out aspects of a system that should be flexible enough to work with such a range of approaches, and in fact enhance them.

Most of us are using networks in some way or another for the projects discussed, presented or developed during closing the loop. Since there is a range of approaches, methodologies and attitudes to this, it seems appropriate to think of methods which would facilitate connection between remote participants who may be uncertain of what data is being sent, how the data will be used, or why, in fact, there is any data at all (since it’s just a game).

The networked aspect of these projects can frequently be described as a struggle of connections and configurations fuelled by the “ecstasy of communication at a distance”. often, it is the network itself which is the object of fascination, the performer, the stage, the invisible centre of attention focusing geographically dispersed centres of attention.

For the purposes of this text, i’ll refer to the system as “sutChwon”, or alternatively “n[tp]M”, which is, of course, “subject to change without notice” for want of a more apt title.

connecting disparate elements

There are no standard setups in this area; most of us who are using networks for exchanging sound, video, text, sensor data or pong scores use a range of equipment for a range of purposes. the difficulty of establishing useful connections between these disparate elements often increases rapidly with the number of people involved in the setup/network (nodes) and the differences between the setups at each node.

n[tp]M is envisioned as a glue (inter)layer, or alternatively as a tubing set to facilitate communication between a wide range of otherwise uncooperative software, hardware and people. to be useful (and/or useable) it should interface easily with other software and other mechanisms, and be easily usable (adaptable?) in a wide range of situations, from telerobotic gardening to automated generation of sound from logfiles on a server.
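To put rough numbers on the claim that the difficulty grows rapidly with the number of nodes, here is a back-of-envelope sketch. It rests on a simplifying assumption that the text does not itself specify: without a glue layer, every distinct setup needs its own translator for every other setup, whereas with one, each setup needs only one adapter in and one adapter out.

```python
# Back-of-envelope sketch, assuming point-to-point translators between every
# pair of distinct setups versus one in/out adapter per setup for a shared
# glue layer. Purely illustrative arithmetic, not part of any specification.

def mesh_links(n: int) -> int:
    """Undirected connections when each of n nodes talks to every other node."""
    return n * (n - 1) // 2

def pairwise_translators(k: int) -> int:
    """Direct translators between k distinct setups, one per direction."""
    return k * (k - 1)

def glue_adapters(k: int) -> int:
    """Adapters needed when each setup only talks to a shared glue layer."""
    return 2 * k

if __name__ == "__main__":
    for k in (2, 4, 8, 16):
        print(f"{k:>2} setups: {mesh_links(k):>3} links, "
              f"{pairwise_translators(k):>3} translators, "
              f"{glue_adapters(k):>2} glue adapters")
```

With 8 different setups that comes to 56 direct translators against 16 adapters. With only 2 setups the glue layer is actually more work (4 adapters against 2 translators), so under this model it pays off only as the network grows and diversifies.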

most networking technologies present an interface to a specific level of network protocols, e.g. an ethernet packet sniffer, a mail program or a web browser. sutChwon attempts to provide network abstractions and/or communication on 4 distinct layers (which are, of course, interdependent and non standard).

the network layer; in which the computers exchange, and manage the exchange of packets of data.

the syntactic layer; in which quantifiable information about the data is exchanged, e.g. what format the data is in, where it is (and where it’s going), what protocols are being used, node response times, connection reliability, transmission times, etc. easily crunched numbers.

the interpretation layer; in which the information collected and exchanged on the syntactic layer is analysed and/or organised to be presented in a human readable form. user level interaction with the connected systems would most often occur on this level.

the conversation layer; in which humans talk about guinea pigs (amongst other things).
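The four layers could be modelled roughly as in the sketch below. It is hypothetical: the field names, the 0.95/0.7 reliability thresholds and the node names in the example are illustrative assumptions, not part of any sutChwon specification. A syntactic-layer record holds the easily crunched numbers, and an interpretation-layer function turns them into a line a person can read.

```python
from dataclasses import dataclass
from enum import Enum
from typing import ClassVar

class Layer(Enum):
    NETWORK = 1         # computers exchanging packets of data
    SYNTACTIC = 2       # quantifiable facts about the data
    INTERPRETATION = 3  # the same facts, organised for human readers
    CONVERSATION = 4    # humans talking about guinea pigs (amongst other things)

@dataclass
class SyntacticReport:
    """Hypothetical syntactic-layer record: easily crunched numbers about one stream."""
    source: str
    destination: str
    media_type: str     # MIME-style type, e.g. "audio/mpeg"
    protocol: str       # e.g. "udp" or "tcp"
    response_ms: float  # measured node response time
    reliability: float  # fraction of packets that arrived, 0.0 to 1.0
    layer: ClassVar[Layer] = Layer.SYNTACTIC  # class attribute, not a field

def interpret(report: SyntacticReport) -> str:
    """Interpretation layer: present the numbers in human-readable form."""
    if report.reliability >= 0.95:
        quality = "solid"
    elif report.reliability >= 0.7:
        quality = "shaky"
    else:
        quality = "barely there"
    return (f"{report.source} -> {report.destination}: {report.media_type} "
            f"over {report.protocol}, {report.response_ms:.0f} ms, {quality}")
```

For example, a report from a hypothetical node `linz` to `brussels` with 82% of packets arriving would read as `linz -> brussels: audio/mpeg over udp, 180 ms, shaky`.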

it is often useful and in fact necessary to be able to access several layers of abstraction from the basic tcp or udp layer, as well as several protocols.

sutChwon provides connections on usually distinct network layers, which may be automatic, or configured as required.

the most common setup i have seen for networked sound exchange in a performance context is an encoder/decoder (most often realencoder or an mpeg server, but more recently quicktime streaming server), a telnet client (for the inevitable troubleshooting and discussion during the performance) and, of course, the instruments themselves (which may or may not be on the same computer). other notable setups have involved ftp sites, web interfaces, cuseeme, email and, unfortunately, MIDI. things become rapidly more involved with the inclusion of visual material, control data or any attempt at synchronisation.

in order to fit between this range of setups, sutChwon should utilise existing technologies, such as XML or MIME (or http headers based on MIME types) and be capable of implementing diverse protocols like OpenSoundControl or telnet. since it will be used in a range of existing systems it must be able to interact with them with as little modification to those systems as possible, while building on their current strengths.
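To give an idea of how small some of these protocols are at the wire level, the sketch below encodes an OpenSoundControl 1.0 message by hand. It uses only the int32, float32 and string argument types from the OSC specification. The address `/synth/freq` is a made-up example; a real instrument defines its own address space.

```python
import struct

def _osc_string(s: str) -> bytes:
    """OSC-string: ASCII bytes plus a terminating null, padded with nulls to a multiple of 4."""
    data = s.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)

def osc_message(address: str, *args) -> bytes:
    """Encode an OSC 1.0 message with int32 ('i'), float32 ('f') and string ('s') arguments."""
    tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):  # bool is a subclass of int, so reject it explicitly
            raise TypeError("booleans are not handled in this sketch")
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)  # big-endian two's complement
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)  # big-endian IEEE 754 single
        elif isinstance(arg, str):
            tags += "s"
            payload += _osc_string(arg)
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return _osc_string(address) + _osc_string(tags) + payload
```

The resulting bytes can be sent as a single UDP datagram (for instance with `socket.sendto`) to whatever host and port an OSC-aware instrument is listening on. Bundles, timetags and the other argument types are left out of this sketch.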

automation will save us all

a substantial amount of information about network activity, data exchange and interconnection of parts can be obtained automatically and presented in a unified manner (hopefully at an appropriate level of detail). the system should be as automatable as possible, so it can be automated as required (which would depend on its context). for example, having a graphic display of who and what is connected to the network, what sources they are using/providing and how reliable and fast (slow!) particular connections are should make such things easier to set up, and easier to use from both a technical and creative point of view. having the system respond to particular events, or to changes in the network, should make a variety of dynamic generative nodes possible.
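Below is a minimal sketch of the monitoring side of this automation. It assumes each node is probed periodically and that a probe yields either a round-trip time or nothing at all. The class name, the sliding window and the reliability threshold are hypothetical choices. The callback hook stands in for "having the system respond to particular events".

```python
from collections import defaultdict, deque
from statistics import mean
from typing import Callable, Deque, Dict, List, Optional, Set

class LinkMonitor:
    """Hypothetical monitor keeping the last `window` probe results per node."""

    def __init__(self, window: int = 20, threshold: float = 0.8):
        self.threshold = threshold
        self.samples: Dict[str, Deque[Optional[float]]] = defaultdict(
            lambda: deque(maxlen=window))
        self.listeners: List[Callable[[str, float], None]] = []
        self.degraded: Set[str] = set()

    def on_degraded(self, callback: Callable[[str, float], None]) -> None:
        """Register something to run when a link drops below the threshold."""
        self.listeners.append(callback)

    def record(self, node: str, rtt_ms: Optional[float]) -> None:
        """Record one probe: a round-trip time in ms, or None if it was lost."""
        self.samples[node].append(rtt_ms)
        rel = self.reliability(node)
        if rel < self.threshold and node not in self.degraded:
            self.degraded.add(node)       # fire once per drop, not on every probe
            for callback in self.listeners:
                callback(node, rel)
        elif rel >= self.threshold:
            self.degraded.discard(node)   # recovered; a later drop fires again

    def reliability(self, node: str) -> float:
        probes = self.samples.get(node, ())
        return sum(p is not None for p in probes) / len(probes) if probes else 0.0

    def mean_rtt(self, node: str) -> Optional[float]:
        replies = [p for p in self.samples.get(node, ()) if p is not None]
        return mean(replies) if replies else None

    def summary(self) -> List[str]:
        """One human-readable line per node, for an interpretation-layer display."""
        lines = []
        for node in sorted(self.samples):
            rtt = self.mean_rtt(node)
            rtt_text = f"{rtt:.0f} ms" if rtt is not None else "no replies"
            lines.append(f"{node}: {self.reliability(node):.0%} delivered, {rtt_text}")
        return lines
```

The callback could just as well trigger a generative process as raise an alarm. The monitor only decides *when* something has changed, not what should happen as a result.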

since the system is flexible and modular, this information can be displayed and used in a variety of ways, and also for a variety of purposes. display and presentation of this collected data is itself an active hci/visualisation problem (again, something beyond the scope of this text). n[tp]M should collect network information in such a way that it can be presented in familiar ways to people with a diverse range of computer skills/uses. however, to be a useful tool, there should definitely be useable, readable interfaces to this slippery layer of glue. ideally, such interfaces would enhance existing ones on their own terms (e.g. a text based programming interface, or a timeline editing system), so in this sense it is automation to facilitate both transparency (disappearing into current systems) and a wider range of vision (extending the scope and interoperability of the system by increasing what is visible).

describing data

known data formats can be understood using a MIME-like mechanism, so they can be dealt with internally, or passed to relevant subsystems/instruments or programs. most media files or data which are exchanged can be described in fairly basic terms, so a common format description method could be developed (if it hasn’t been done already) to describe unknown formats, or extend n[tp]M. something along the lines of XML documents, describing things like headers and byte ordering with some human-understandable description, could be used.
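Below is a sketch of what such a description might look like, and how a node could use it to unpack data it has never seen before. The XML vocabulary (`format`, `field`, `byteorder` and the type names) is invented for this example rather than taken from an existing standard. Only fixed-size binary fields are handled.

```python
import struct
import xml.etree.ElementTree as ET

# Hypothetical description of a hypothetical sensor frame; the element and
# attribute names are assumptions made for this sketch.
DESCRIPTION = """<format name="x-sensor-frame" byteorder="big">
  <note>one reading from a networked sensor</note>
  <field name="channel" type="uint8"/>
  <field name="count" type="uint16"/>
  <field name="value" type="float32"/>
</format>"""

# Map the description's type names onto Python struct format codes.
STRUCT_CODES = {"uint8": "B", "int8": "b", "uint16": "H", "int16": "h",
                "uint32": "I", "int32": "i", "float32": "f", "float64": "d"}

def compile_description(xml_text: str):
    """Turn a format description into a struct layout plus the field names."""
    root = ET.fromstring(xml_text)
    order = ">" if root.get("byteorder") == "big" else "<"  # no alignment padding
    fields = root.findall("field")
    layout = order + "".join(STRUCT_CODES[f.get("type")] for f in fields)
    return struct.Struct(layout), [f.get("name") for f in fields]

def decode(xml_text: str, data: bytes) -> dict:
    """Unpack one frame of otherwise unreadable data using its description."""
    layout, names = compile_description(xml_text)
    return dict(zip(names, layout.unpack(data[:layout.size])))
```

The `note` element is ignored by the decoder. It is there for the human reader, which is the other half of what the description is for.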

the main purpose of this format for data description is to enable otherwise unreadable data to be used. this makes it possible for participants in the network to exchange data without necessarily knowing if the other participants can understand it. a consequence of having an interconnected network is that if one node on the network can describe a data format, that description can be shared with any nodes requiring it.
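The sharing mechanism could be as simple as the hypothetical sketch below. A node that lacks a description asks its direct peers, then caches whatever it receives so that it can pass the description on in turn. Asking only direct neighbours, rather than flooding the whole network, is an assumption made to keep the example short.

```python
from typing import Dict, List, Optional

class Node:
    """Hypothetical n[tp]M node holding format descriptions keyed by media type."""

    def __init__(self, name: str, descriptions: Optional[Dict[str, str]] = None):
        self.name = name
        self.descriptions: Dict[str, str] = dict(descriptions or {})
        self.peers: List["Node"] = []

    def describe(self, media_type: str) -> Optional[str]:
        """Answer a peer's request from the local registry only."""
        return self.descriptions.get(media_type)

    def lookup(self, media_type: str) -> Optional[str]:
        """Use a local description if there is one, else ask peers and cache the answer."""
        if media_type in self.descriptions:
            return self.descriptions[media_type]
        for peer in self.peers:
            found = peer.describe(media_type)
            if found is not None:
                self.descriptions[media_type] = found  # now this node can share it too
                return found
        return None
```

In a chain `a <- b <- c`, node `c` cannot find a description held only by `a` until `b` has fetched it. After that, the description is available one hop further along.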

describing protocols

explaining how protocols are implemented in a generalized manner, or in a form that can be handled by another program, is a slightly trickier problem, but is essentially dealt with in a similar way. a description of the protocol is passed to n[tp]M, which can choose to deal with it internally (if it has the capacity), send data to external programs, or do a combination of the two. for example, a web based interface to a synth could have its form fields assigned to controls on the synth. on another level, the html tags could be used to create sounds, or inversely, the synth output could be used to generate html data which is sent to a server by an external ftp program.
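The synth example might look something like the sketch below, under assumed conditions: the form sends values from 0 to 100, and the synth exposes three controls whose names and ranges are hypothetical. Python's `urllib.parse.parse_qs` does the parsing of the form data.

```python
from urllib.parse import parse_qs

# Hypothetical synth controls: name -> (minimum, maximum) in the synth's own units.
SYNTH_CONTROLS = {
    "cutoff": (20.0, 20000.0),  # Hz
    "resonance": (0.0, 1.0),
    "volume": (0.0, 1.0),
}

def form_to_controls(query: str, mapping=SYNTH_CONTROLS) -> dict:
    """Map web form fields (values 0-100) linearly onto synth control ranges."""
    controls = {}
    for field, values in parse_qs(query).items():
        if field not in mapping:
            continue  # unknown form fields are ignored, not fatal
        try:
            fraction = min(max(float(values[-1]) / 100.0, 0.0), 1.0)  # clamp to 0..1
        except ValueError:
            continue  # non-numeric input is skipped
        low, high = mapping[field]
        controls[field] = low + fraction * (high - low)
    return controls
```

A linear mapping is the simplest possible choice. A real cutoff control would more likely be mapped exponentially, since pitch perception is roughly logarithmic.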

since a protocol is essentially a description of a state machine and the magic words to change it, or elicit a response from it, protocol descriptions could be implemented (at least skeletally) in some kind of finite state machine emulator which has assignable connections to other software.
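A skeletal finite state machine emulator of this kind can be driven entirely by a table of `(state, magic word) -> (next state, reply)` entries, with hooks that let other software be attached to particular states. The toy protocol below (`HELLO`, `SEND`, `END`, `BYE`) is hypothetical and only illustrates the mechanism.

```python
from typing import Callable, Dict, Optional, Tuple

Transition = Tuple[str, Optional[str]]  # (next state, reply to send back, if any)

class ProtocolMachine:
    """Table-driven finite state machine with assignable hooks (a sketch)."""

    def __init__(self, start: str, table: Dict[Tuple[str, str], Transition]):
        self.state = start
        self.table = table
        self.hooks: Dict[str, Callable[[str], None]] = {}

    def on_enter(self, state: str, hook: Callable[[str], None]) -> None:
        """Connect another piece of software to a state; it receives the triggering word."""
        self.hooks[state] = hook

    def feed(self, word: str) -> Optional[str]:
        key = (self.state, word)
        if key not in self.table:
            return "ERR unknown command"  # unknown words leave the state unchanged
        self.state, reply = self.table[key]
        hook = self.hooks.get(self.state)
        if hook is not None:
            hook(word)
        return reply

# A hypothetical toy protocol described as a table.
TOY_PROTOCOL = {
    ("idle", "HELLO"): ("ready", "OK ready"),
    ("ready", "SEND"): ("receiving", "OK go ahead"),
    ("receiving", "END"): ("ready", "OK received"),
    ("ready", "BYE"): ("closed", None),
}
```

Because the protocol is plain data, a description received from another node could in principle be loaded into the same machine without writing new code.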

protocol negotiation protocol

in order to be able to exchange data either as a single meaningful lump, or by using a specific protocol, both the sender and receiver need to be able to interpret this data, or dataflow. the traditional response to data arriving in an unknown format usually falls into 2 categories: 1) output junk, 2) crash.

the n[tp]M model would facilitate the exchange of unknown, or arbitrary data with arbitrary protocols by establishing a protocol for the exchange of abstract descriptions of formats and protocols.

pnp is used when an n[tp]M node can’t understand, interpret, or in fact do anything with a stream of data it is about to receive.

it seems there are 3 ways to handle data which is being sent in an unknown format, or using an unknown protocol (in order of difficulty):

- display it anyway, using another protocol

- exchange information about the format/protocol, then proceed.

- try to learn the protocol (eg. challenge/response sequences)

of course there are the implicit 4th and 5th options: ignore it, and crash. neither of which i’m intending to implement (except possibly the ‘ignore’ option, by connecting the data source to a /dev/null abstraction).
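The first option and the 'ignore' option are the easiest to sketch. One possible reading of "display it anyway, using another protocol" is a fallback to a hex dump with a printable-text column, which any terminal can show. The 'ignore' option simply writes the stream to the operating system's null device (`os.devnull`). Both functions are illustrative, not part of a specified n[tp]M interface.

```python
import os

def display_anyway(data: bytes, width: int = 16) -> str:
    """Show unknown bytes as a hex dump, with a printable-ASCII column."""
    lines = []
    for offset in range(0, len(data), width):
        chunk = data[offset:offset + width]
        hex_part = " ".join(f"{b:02x}" for b in chunk)
        # control characters and non-ASCII bytes are shown as '.'
        text_part = "".join(chr(b) if 32 <= b < 127 else "." for b in chunk)
        lines.append(f"{offset:08x}  {hex_part:<{width * 3}} {text_part}")
    return "\n".join(lines)

def ignore(data: bytes) -> None:
    """The 'ignore' option: connect the data to the null device."""
    with open(os.devnull, "wb") as sink:
        sink.write(data)
```

Replacing control characters with `.` in the text column is also a small instance of filtering out known incompatibilities.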

in order to establish an exchange of data that will be as intelligible as possible to both the sender and receiver, the nodes go thru a series of query/response cycles in which they tell each other what types of data they can currently handle, what data is about to be sent and what protocols are mutually understandable. if one node can’t currently handle the data or protocol, it will either request a description from another node and attempt to implement a mechanism for handling the requested data, or ask the sender to switch to another format (or protocol) if possible. if a reliable channel can’t be established, each node will attempt to exchange data using the most compatible methods possible (which would have varying degrees of legibility) and possibly filter out known incompatibilities between the formats (control characters, for example).
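The query/response cycle could be reduced to a function like the one below. It is a hypothetical sketch with three steps. First, the sender offers formats in order of preference, and the receiver takes the first one it already handles. Failing that, the receiver tries to fetch a description for one of the others. As a last resort, it falls back to a lowest-common-denominator format (assumed here to be `text/plain`) if the sender can produce it.

```python
from typing import Callable, Iterable, Optional, Set

def negotiate(offered: Iterable[str], can_handle: Set[str],
              fetch_description: Callable[[str], bool],
              fallback: str = "text/plain") -> Optional[str]:
    """Pick a format both nodes can use, or None if no channel can be agreed."""
    offered = list(offered)  # sender's formats, most preferred first
    # 1. the first offered format the receiver already understands
    for fmt in offered:
        if fmt in can_handle:
            return fmt
    # 2. the first offered format for which a description can be obtained
    for fmt in offered:
        if fetch_description(fmt):
            can_handle.add(fmt)  # the receiver has learned a new format
            return fmt
    # 3. the most compatible method possible, with reduced legibility
    return fallback if fallback in offered else None
```

Asking the sender to switch formats corresponds here to the sender offering alternatives. A receiver that rejects the first choice simply moves down the list.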

there should be a range of methods for enhancing the reliability of pnp. a simple method would involve registries of known data formats, protocols, interoperability matrices and known conflicts. a more complex method could use machine learning techniques to analyse exchanges (or read rfcs) and, with some human feedback, build templates or translators which would be made available to the network.
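Suppose translators are registered as edges between formats, which is one assumed representation of an "interoperability matrix". Finding a way from one format to another then becomes a shortest-path search. The breadth-first search below returns the shortest chain of translators, or `None` when no chain exists. The registry contents in the example are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional

def translation_path(translators: Dict[str, List[str]], source: str,
                     target: str) -> Optional[List[str]]:
    """Breadth-first search for the shortest chain of translators from source to target."""
    if source == target:
        return [source]
    previous: Dict[str, Optional[str]] = {source: None}
    queue = deque([source])
    while queue:
        fmt = queue.popleft()
        for nxt in translators.get(fmt, []):
            if nxt in previous:
                continue  # already reached by an equally short or shorter chain
            previous[nxt] = fmt
            if nxt == target:
                # walk back through the predecessors to rebuild the chain
                path = [nxt]
                while previous[path[-1]] is not None:
                    path.append(previous[path[-1]])
                return path[::-1]
            queue.append(nxt)
    return None

# Hypothetical registry: format -> formats it can be translated into.
REGISTRY = {
    "midi": ["osc"],
    "osc": ["text/plain"],
    "text/html": ["osc"],
    "audio/wav": ["audio/mpeg"],
}
```

With this registry, `midi` reaches `text/plain` via `osc`, while `audio/wav` has no route to `osc` at all. Known conflicts could be handled by leaving the corresponding edges out of the registry.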

development(s)

since a working version of sutChwon involves the development of several interdependent parts to function as described, it may take some time before it becomes particularly useful. however, one of its advantages is that its usefulness is enhanced by a diverse network of interconnected parts, and the more diverse the parts, the more useful it becomes.

active development will consist of four main parts:

- formalising a specification of the protocols and description formats

- writing a basic reference implementation

- incorporating feedback from potential use(r)s to determine the accuracy and scope of the specification and the implementation.

- extensive testing in a wide range of existing systems

while each of these aspects is somewhat self contained, they are all absolutely necessary for n[tp]M to function in networks that are subject to change without notice.

ng oct 2000

Authors:

Alex Barth, Tim Boykett, Nik Gaffney, Maja Kuzmanovic and the usual suspects